See It In Action

A clean, intuitive interface for generating and managing tools.

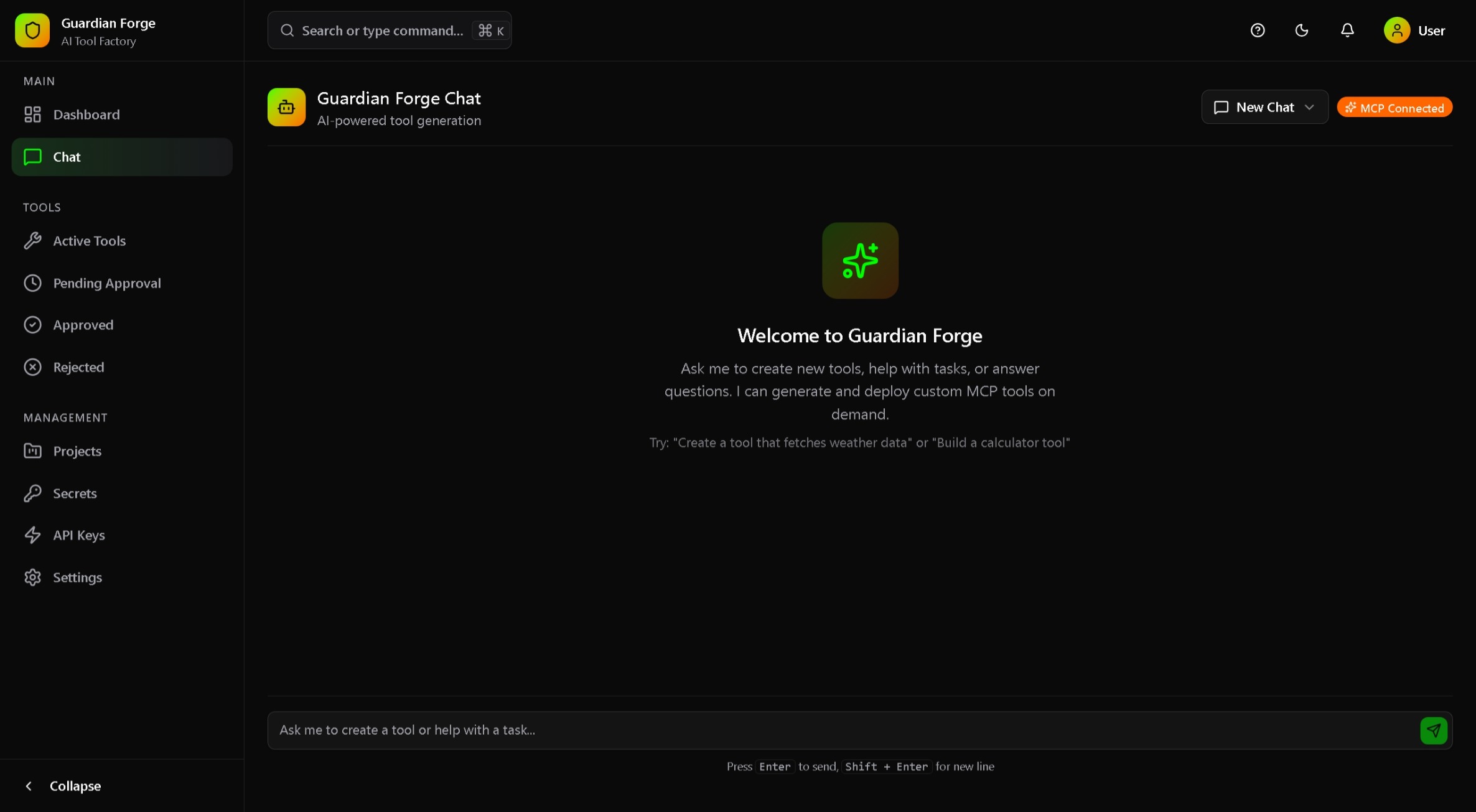

Chat Interface

Request tools in natural language. The chat is connected over MCP (Model Context Protocol) for integration with AI clients.

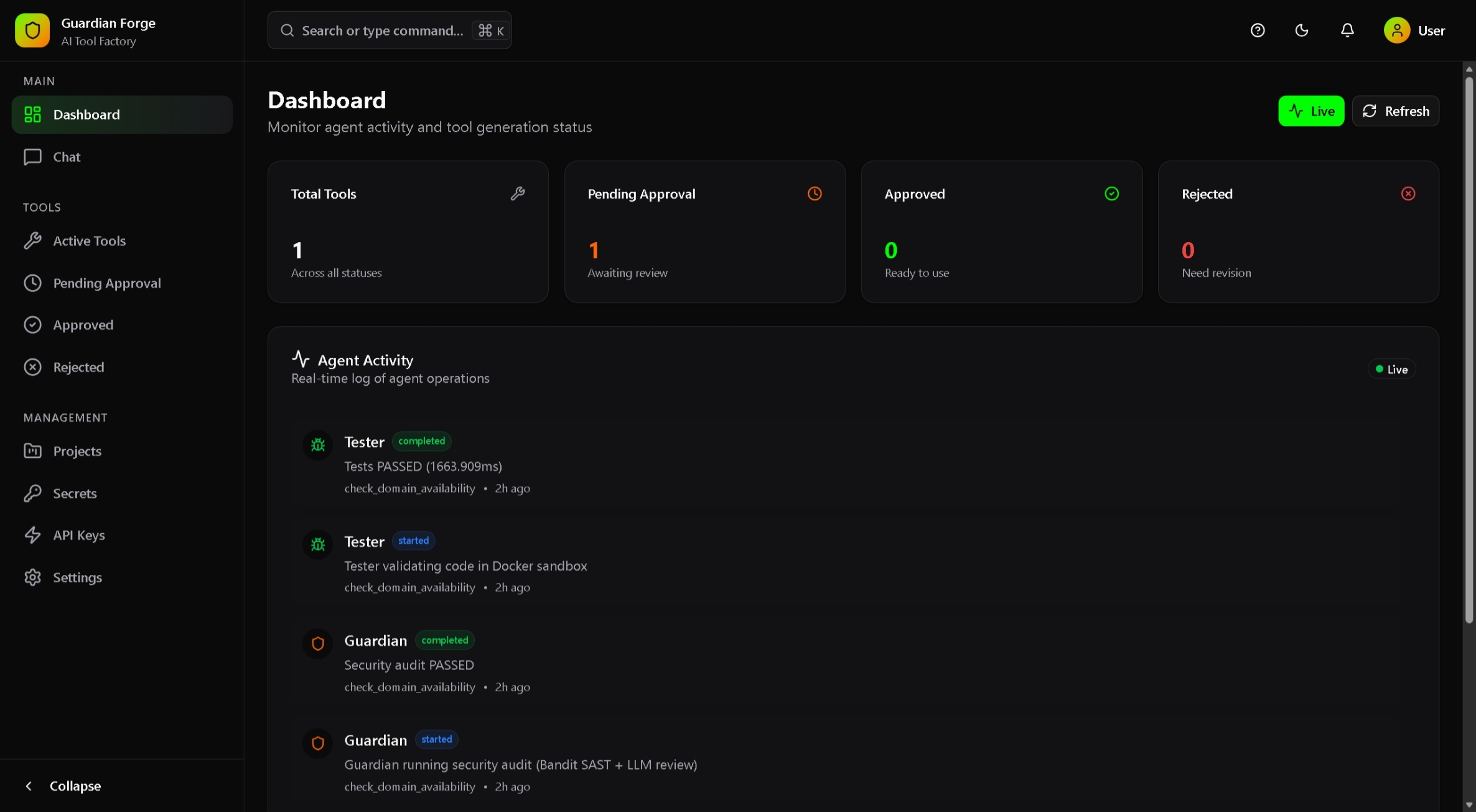

Live Dashboard

Real-time agent activity: watch the Engineer, Guardian, and Tester agents as they work.